Every organization today has an AI strategy.

Far fewer have an AI-fluent workforce.

And that gap is quickly becoming the single biggest predictor of whether AI investments pay off or stall.

Here is the uncomfortable reality most leadership teams are not talking about: across most organizations, AI adoption is unmanaged and uneven. There is no shared language, no common standard, and no way to know who is using AI well, who is using it recklessly, and who is not using it at all.

A 2026 BCG study highlights a patchwork of practices that fragments people into distinct groups, including the “Cautious Skeptic”: employees who are “wary of AI’s reliability and its implications for their expertise and job identity.”

Without intervention, this fragmentation compounds over time. The gap between the fluent and the non-fluent grows, making it harder to standardize processes, ensure quality, and manage risk. This is not a stable situation. It is an active threat to operational integrity and competitive posture.

The data confirms the urgency.

According to Deloitte’s 2026 State of AI in the Enterprise report, 53% of organizations now cite “educating the broader workforce to raise overall AI fluency” as the number-one way they’re adjusting their AI talent strategies. Yet only 34% of surveyed organizations are truly reimagining their businesses around AI, while the rest remain stuck optimizing what already exists.

McKinsey’s research tells an even sharper story: demand for AI fluency in the workforce jumped nearly sevenfold between 2023 and mid-2025. AI fluency is now a listed requirement in job postings covering approximately seven million US workers, and that number is growing fast.

The message is clear. AI tools are available to everyone. The organizations that win will be the ones that turn this chaos into capability, with a structural backbone that makes AI fluency measurable, manageable, and aligned to strategic ambition.

This guide breaks down exactly what AI fluency means, how to measure it, what leading companies are doing to build it, and how to design your own fluency strategy.

What Is AI Fluency?

AI fluency is the ability to work effectively, efficiently, ethically, and safely with AI systems. It goes well beyond AI literacy, which is simply understanding what AI is. Fluency means knowing how to collaborate with AI tools in your actual job, evaluating outputs critically, iterating on results, and understanding the boundaries of what AI can and cannot do.


Critically, AI fluency must be rooted in observable behavior, not attitudes.

It is not measured by how people think or feel about AI, but by what they actually do with the tools day to day:

- Can they use approved tools safely?

- Do they verify AI outputs?

- Have they integrated AI into recurring workflows?

- Are they coaching others?

These are behavioral markers that separate organizations that are genuinely building capability from those that are running awareness campaigns and hoping for the best.

Think of the difference between knowing that a language exists and being able to hold a conversation in it.

AI literacy is the first; AI fluency is the second. A fluent employee doesn’t just know that ChatGPT exists. They use AI tools daily to draft, analyze, automate, and make better decisions, all while understanding the risks of hallucination, data privacy, and over-reliance.

Anthropic, the maker of Claude, recently partnered with academic experts to launch a dedicated AI Fluency course built around what it calls the “4D” framework: Delegation, Description, Discernment, and Diligence.

This framework captures the practical skills that separate someone who dabbles with AI from someone who is genuinely fluent: knowing what to hand off to AI, how to communicate with it, how to evaluate its output, and how to maintain quality standards throughout.

AI Fluency vs. AI Literacy: Why the Distinction Matters

Many corporate training programs focus on AI literacy, offering workshops that explain what large language models are, how machine learning works, and what generative AI can do. That knowledge is valuable, but it does not change behavior.

AI fluency changes behavior.

A literate employee understands that AI can summarize documents. A fluent employee has AI embedded into their weekly reporting workflow, saving hours every week without anyone telling them to do it.

The distinction matters because organizations that stop at literacy consistently report low adoption rates. Research from late 2025 found that companies launching standalone AI tools saw adoption plateau at 30–40%, while companies embedding AI into existing workflows achieved 60–80% adoption.

Fluency is what bridges the gap.

Companies Getting AI Fluency Right: How Zapier Moved from “Code Red” to 97% AI Adoption

Zapier’s AI transformation is perhaps the most striking case study of AI fluency driving company-wide adoption. In March 2023, CEO Wade Foster issued an internal “Code Red,” declaring that AI would fundamentally change their business.

Two years later, 97% of Zapier’s 800-plus employees actively use AI in their daily work.

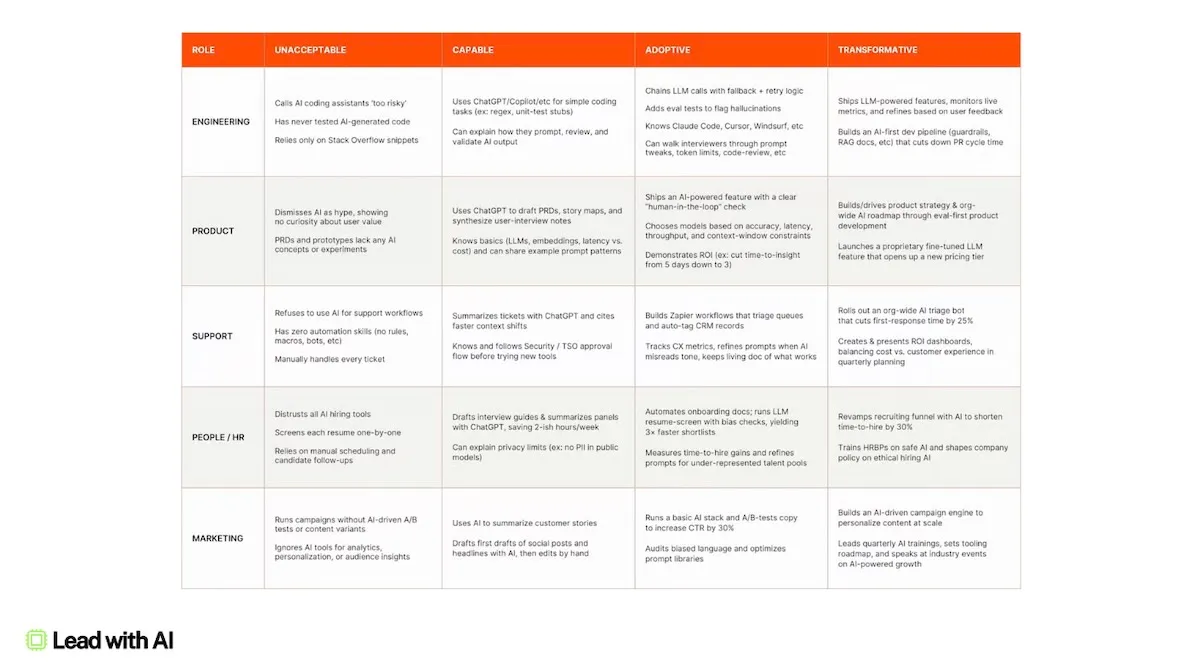

A critical part of how they got there was developing a four-tier AI fluency framework, built first as a hiring tool and then applied company-wide.

The levels range from “Unacceptable” (actively resistant to AI) to “Capable” (basic tool usage) to “Adoptive” (AI embedded in personal workflows) to “Transformative” (rethinking strategy and delivering new value using AI).

Zapier now requires 100% of new hires to demonstrate AI fluency, with tiered assessments tailored by role: entry-level roles are tested on basic tool proficiency while senior roles face evaluation on workflow integration and strategic thinking.

Their approach combined top-down urgency with bottom-up experimentation.

Leadership set the direction, but adoption spread through internal Slack channels, builder sessions at team retreats, weekly demos, and peer learning. Their Support team built a ticket summarizer that cut average handle time in half.

Their People team built tools for onboarding and performance coaching without writing a line of code.

According to Chief People Officer Brandon Sammut, Zapier’s HR team spent five weeks in the spring of 2025 implementing AI fluency standards so the company could evaluate every candidate equally. Zapier has since open-sourced its playbook to help other organizations follow the same path.

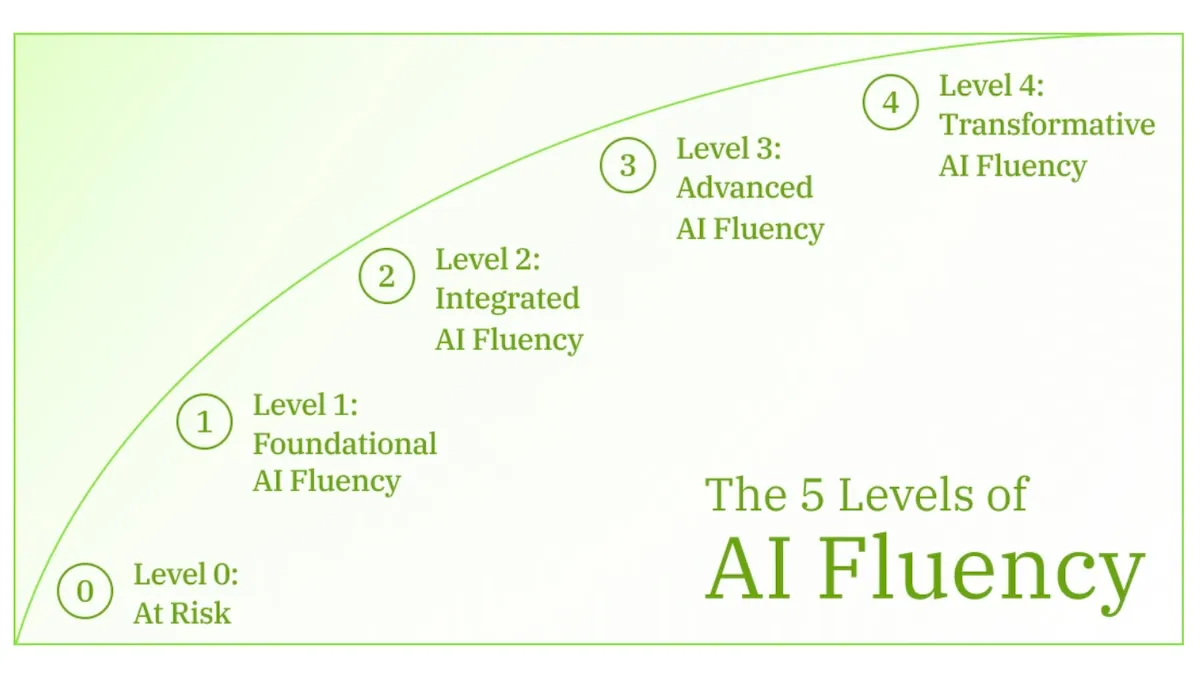

The Five Levels of AI Fluency

If you haven't introduced an AI Fluency matrix in your organization, build upon ours.

After working with more than 1,000 leaders, we defined five distinct levels that map how individuals and teams progress from non-adoption to organizational transformation. Here is a breakdown of each level, along with what it looks like in practice:

Level 0: At Risk / Non-Adoption

A foundational insight: lacking AI fluency is not a neutral starting point.

Level 0 represents a state of genuine risk to the business.

At Level 0, employees have not adopted AI at all, or they use it carelessly without understanding guardrails. This is rarely a skills problem. It’s a mindset issue. These individuals may be skeptical about AI, unaware of approved tools, or simply resistant to changing their routines.

Some fall into the “Informal Experimenter” category, using unapproved tools and introducing hidden risks.

Others are outright avoiders, creating capability gaps and organizational drag.

For an organization pursuing an AI-forward strategy, persistent Level 0 status is not just a training question. It is a talent-fit question and a risk management issue that requires active mitigation.

Level 1: Foundational Fluency

Level 1 is where real value begins.

A Level 1 employee uses AI as a responsible individual contributor for straightforward, repeatable tasks: summarizing documents, drafting emails, and preparing initial analysis.

Critically, they understand the guardrails. They know which tools are approved, respect data boundaries, recognize when AI might hallucinate, and know when not to use AI.

This is the “safe pair of hands” level.

The test for leaders: would you trust every person on your team to use AI unsupervised on a real business problem? If not, your team has not yet completed Level 1.

Level 2: Integrated Fluency

The leap from Level 1 to Level 2 is subtle but powerful.

Level 2 is not about using AI occasionally; it is about embedding AI into recurring workflows.

These employees don’t “decide” to use AI each time: it is already part of at least five everyday workflows, such as weekly reporting, customer analysis, and research.

Some build simple tools for themselves, like custom GPTs or lightweight automations.

This is where compound productivity emerges: a steady accumulation of time savings, clarity, and focus.

For leaders, Level 2 is personal leverage. You show up better prepared, think more clearly, and waste less energy on low-value work.

Level 3: Advanced Fluency

Level 3 is where AI shifts from personal advantage to team capability.

These leaders stop asking “How can AI help me?” and start asking “How should work be done now?”

They redesign workflows, connect tools, create smart automations, and, critically, they teach.

They coach colleagues, share patterns, and normalize experimentation while enforcing standards.

Most organizations stall here because they confuse Level 3 with Level 4.

They wait for top-down strategy decks when what they actually need are credible internal champions (managed through a Champions Program) who know how work gets done.

Level 4: Transformative Fluency

Level 4 is not for everyone, and it should not be.

These are the enterprise architects: senior leaders who shape the organization’s long-term AI approach to fundamentally transform the business.

They scan for industry disruption, make build-versus-buy decisions, and define success metrics, risk thresholds, and governance models.

Their work creates sustainable advantage through scale and coherence.

But here’s the critical warning: Level 4 without Levels 1–3 underneath it is just “AI theater.” You cannot compensate for an unsafe, inconsistent, or underpowered workforce with strategy documents alone.
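To make the levels concrete, here is a minimal Python sketch of how the behavioral markers above might be turned into a single level. The field names and the rule that each level requires every lower level's markers are our own illustrative assumptions, not a published scoring rubric. Only the five-workflow threshold comes from the Level 2 description.

```python
from dataclasses import dataclass

# Hypothetical behavioral snapshot for one employee; field names are illustrative.
@dataclass
class Behaviors:
    uses_approved_tools_safely: bool  # Level 1 marker: respects guardrails and data boundaries
    verifies_outputs: bool            # Level 1 marker: checks for hallucination
    integrated_workflows: int         # Level 2 marker: recurring workflows with AI embedded
    redesigns_team_workflows: bool    # Level 3 marker
    coaches_others: bool              # Level 3 marker
    shapes_enterprise_strategy: bool  # Level 4 marker

def fluency_level(b: Behaviors) -> int:
    """Return the highest level reached, requiring the markers of every lower level."""
    if not (b.uses_approved_tools_safely and b.verifies_outputs):
        return 0  # At Risk / Non-Adoption
    if b.integrated_workflows < 5:
        return 1  # Foundational Fluency
    if not (b.redesigns_team_workflows and b.coaches_others):
        return 2  # Integrated Fluency
    if not b.shapes_enterprise_strategy:
        return 3  # Advanced Fluency
    return 4      # Transformative Fluency

print(fluency_level(Behaviors(True, True, 6, False, True, False)))  # 2
```

Because the checks run in order, someone who shapes enterprise strategy but never verifies AI outputs still scores Level 0. This mirrors the warning that Level 4 without Levels 1–3 underneath it is just AI theater.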

AI Fluency Is a Portfolio, Not a Ladder

One of the most common mistakes organizations make is treating AI fluency as a single benchmark and pushing everyone to the highest level possible. This is wrong. The objective is not to turn every employee into a Level 4 AI leader. Value emerges from the system as a whole.

AI fluency drives three distinct modes of value creation, and each level assumes mastery of the ones before it:

- Individual Productivity at Levels 1 and 2 focuses on responsible everyday use and weaving AI into recurring workflows to save time, lift quality, and reduce cognitive load.

- Workflow Redesign and Enablement at Level 3 shifts the focus from personal use to redesigning team processes, building smart automations, and actively coaching colleagues.

- Organizational Transformation and Governance at Level 4 involves enterprise architects shaping the company’s entire AI roadmap, guiding platform investments, and building sustainable competitive advantage.

The right model is a portfolio pyramid.

At the top, a small group of Shapers at Level 4 leads strategy. Below them, a defined cohort of Champions at Level 3 drives adoption and workflow redesign. The Majority of employees operate at a safe, productive baseline at Level 2. And at the bottom, a minimal group sits At Risk at Level 0, requiring active mitigation.

As Brandon Sammut, Chief People Officer at Zapier, told us in a members-only interview:

"I don't think everyone in an organization needs to operate at transformative. If you go back to the customer support example, did Lauren need all 80 people on her support team to be operating at that transformative level in order for the team to achieve the results? And the answer to that is no. You would definitely need your pioneers to actually engineer and show the way — that could be two, three, five people on a team of 80. The rest need to be adaptive. They need to be able to adopt the new ways of working and put it to work to produce measurable improvements in their performance."— Brandon Sammut, Chief People Officer, Zapier

The goal is to deliberately design this mix, not to push everyone up a ladder.
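One way to make “deliberately design this mix” operational is to compare a team’s actual distribution of levels with a target. The sketch below assumes hypothetical target percentages per level. The article prescribes the shape of the pyramid, not these numbers, and the 80-person team is invented for illustration.

```python
from collections import Counter

# Hypothetical target shares in percent. The article describes the pyramid's shape
# (minimal Level 0, a large baseline majority, a cohort of Champions, a few Shapers);
# these particular numbers are only an illustration.
TARGET_PCT = {0: 0, 1: 15, 2: 70, 3: 12, 4: 3}

def portfolio_gap(levels: list[int]) -> dict[int, int]:
    """Actual minus target headcount per level: positive = surplus, negative = shortfall."""
    counts = Counter(levels)
    n = len(levels)
    return {lvl: counts[lvl] - round(pct * n / 100) for lvl, pct in TARGET_PCT.items()}

# A hypothetical 80-person team.
team = [0] * 8 + [1] * 30 + [2] * 38 + [3] * 3 + [4] * 1
print(portfolio_gap(team))  # {0: 8, 1: 18, 2: -18, 3: -7, 4: -1}
```

Read this way, the example team has too many people at Levels 0 and 1 and too few Champions. That points toward uplift and a Champions Program rather than more Shapers.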

What the Research Says About AI Fluency and Business Outcomes

The evidence linking AI fluency to business performance is becoming hard to ignore.

McKinsey’s 2025 State of AI survey found that the single biggest factor affecting whether organizations see EBIT impact from AI is the redesign of workflows, not the technology itself. High performers are distinguished by senior leaders who actively role-model AI use, by role-based capability training, and by mechanisms for employee feedback. In other words, the human side is what separates value from vaporware.

Deloitte’s 2026 survey of 3,235 global leaders found that while worker access to AI rose by 50% in 2025, the AI skills gap remains the single biggest barrier to integration. Education, not role redesign, was the most common way companies adjusted their talent strategies. But the highest-performing organizations went further: they reimagined jobs to combine human strengths with AI capabilities, creating new roles like AI operations managers and human-AI interaction specialists.

IDC projects that investments in AI solutions will yield a cumulative global impact of $22.3 trillion by 2030, with every new dollar spent on AI generating an additional $4.90 in the broader economy. But that multiplier only materializes when people can actually use the tools.

Perhaps the most revealing benchmark is the 70-20-10 rule that emerged from multiple 2025 studies: 70% of AI transformation investment should go to people and process change, 20% to infrastructure and integration, and 10% to the AI tools themselves. Most companies were allocating these investments in reverse.
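As a quick worked example, the sketch below splits a budget according to the 70-20-10 rule. It uses integer percentages to avoid floating-point rounding. The category keys are our own labels, and sending any rounding remainder to people and process is an assumed convention.

```python
# Percentages from the 70-20-10 rule; the dictionary keys are illustrative labels.
SPLIT_PCT = {
    "people_and_process": 70,
    "infrastructure_and_integration": 20,
    "ai_tools": 10,
}

def allocate(budget: int) -> dict[str, int]:
    """Split a whole-dollar budget by SPLIT_PCT; any rounding remainder goes to people and process."""
    alloc = {area: budget * pct // 100 for area, pct in SPLIT_PCT.items()}
    alloc["people_and_process"] += budget - sum(alloc.values())
    return alloc

print(allocate(1_000_000))
# {'people_and_process': 700000, 'infrastructure_and_integration': 200000, 'ai_tools': 100000}
```

A company “allocating in reverse” would put $700,000 of that same budget into tools and only $100,000 into people and process.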

AI Fluency Decay: A Dynamic System, Not a Static State

There's a half-life to AI fluency. As tools, models, and interfaces evolve, what counted as fluency six months ago may no longer be sufficient. We call this AI fluency decay, and it is why a one-off training initiative is not enough.

Organizations that treat fluency as a box to check rather than a condition to maintain will find themselves in a state of strategic illusion: leadership believes the workforce is current because training was completed, while the gap between internal operating standards and external reality widens silently.

AI fluency does not decay because people forget what they learned. It decays because the environment redefines what competence means. Four mechanisms drive this:

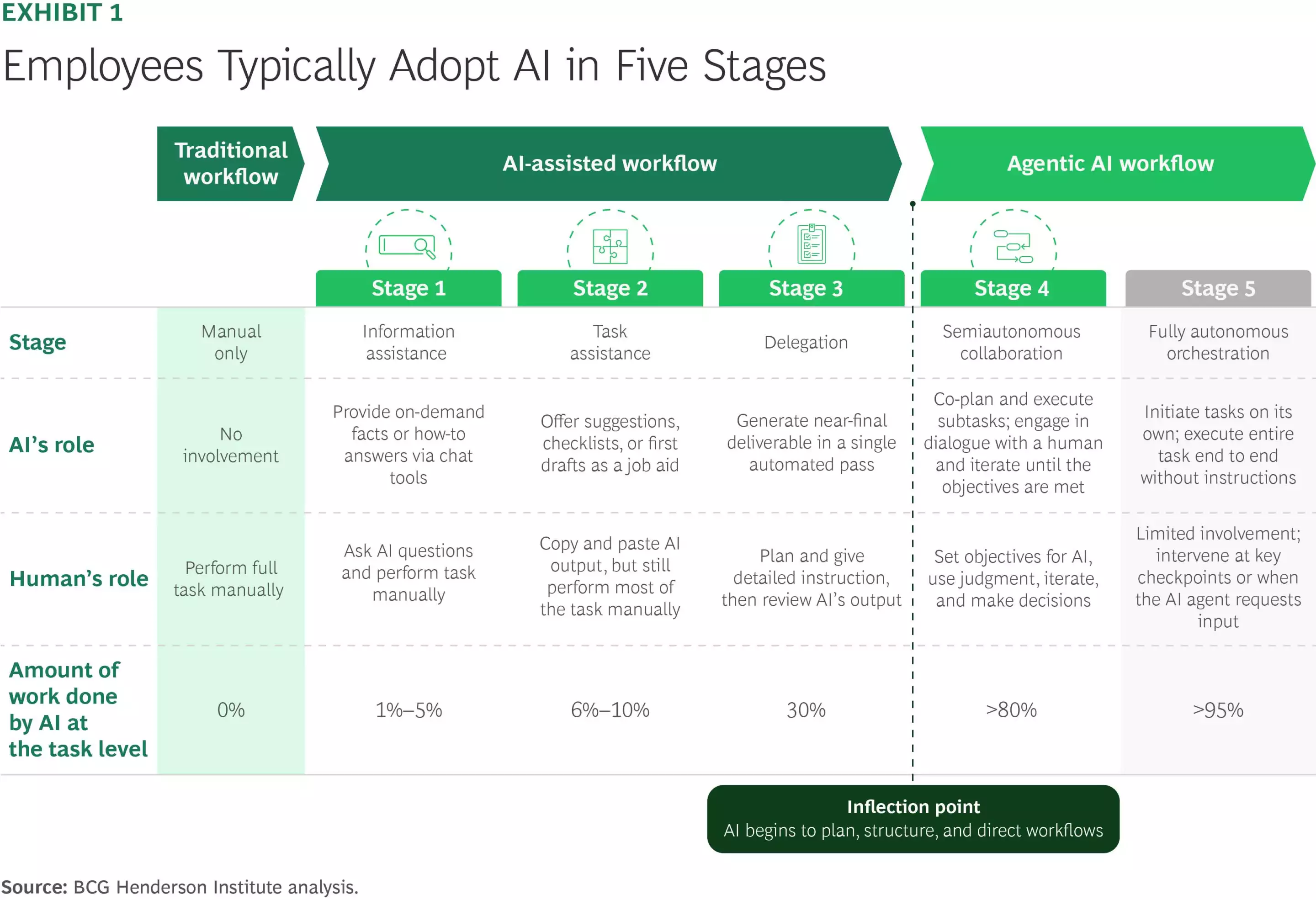

- Tools Keep Improving. AI models and platforms now improve almost monthly, and the way to get the most out of them (think GPT-5 vs. GPT-4o) changes with them. New features like Deep Research and Cowork/Agent Mode can give you a competitive edge. But if you don’t actively adjust, you keep applying yesterday’s habits in today’s environment.

- What Counts as “Advanced” Keeps Moving. Early on, writing good prompts felt advanced. Now, building assistants is becoming the baseline. And agents are around the corner. So your relative level drops even if you haven’t regressed.

- Workflows Shift, Not Just Features. AI is changing how work should be structured and what we should still spend our scarce human hours on. For example, if you keep using AI as a “smart autocomplete” while others redesign workflows, your fluency will be outdated.

- Confidence Freezes Learning. This is the most dangerous mechanism, and one we see all the time: people feel fluent, use AI daily, and feel ahead. That very confidence reduces much-needed exploration. So while the environment evolves, their learning slows, a problem that compounds with each new AI upgrade.

The instinct when decay sets in is to train again.

Run another workshop. Update the curriculum. But the problem is structural, not episodic.

One-off training treats competence as a state to be achieved. In a fast-evolving environment, competence is a condition to be maintained. The distinction is the same one that separates cybersecurity awareness from continuous patching, or a pilot’s initial certification from recurring recertification.

The most effective organizations treat fluency as a living system built around a continuous cycle: Assess where people are, Uplift them to the target portfolio mix, and Refresh on a regular cadence, approximately every six months, to prevent capability slippage and introduce new patterns.
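The Refresh step can start with something as simple as a staleness check. This sketch assumes each person's last assessment date is recorded somewhere. It also uses 182 days as a stand-in for “approximately every six months.” Both are assumptions, and the names and dates are hypothetical.

```python
from datetime import date, timedelta

# Assumed cutoff standing in for "approximately every six months".
REFRESH_INTERVAL = timedelta(days=182)

def due_for_refresh(last_assessed: dict[str, date], today: date) -> list[str]:
    """Return people whose last assessment is older than REFRESH_INTERVAL, oldest first."""
    stale = [(when, who) for who, when in last_assessed.items()
             if today - when > REFRESH_INTERVAL]
    return [who for when, who in sorted(stale)]

print(due_for_refresh(
    {"ana": date(2025, 6, 1), "ben": date(2025, 12, 1), "cho": date(2025, 3, 1)},
    today=date(2026, 1, 15),
))  # ['cho', 'ana']
```

Listing the oldest assessments first keeps the refresh queue focused on the people whose picture of “current fluency” has drifted furthest from the frontier.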

How to Build AI Fluency in Your Organization

Step 1: Run an AI Fluency Diagnostic

Before you can build fluency, you need to know where you stand. An AI fluency diagnostic assesses the current capabilities of your workforce across the fluency levels described above. This is not a single survey. It should combine self-assessment, manager evaluation, and observable behaviors, such as whether employees are actually using approved AI tools and how frequently.

Udemy Business, for example, offers a dedicated AI Fluency Assessment that helps organizations understand whether their teams are merely AI-aware or truly AI-enabled, and what to prioritize next based on actual fluency levels. The Lead with AI Fluency Matrix provides another practical starting point for benchmarking.

The diagnostic should answer three questions: Where are our people now? Where do they need to be? And what is the gap by role, team, and function?

Step 2: Define Your Fluency Portfolio by Role

Not everyone needs the same level of fluency. After running your diagnostic, map out the target fluency level for each role category. Individual contributors might need solid Level 1 with exposure to Level 2. Managers and team leads should generally target Level 2. Department heads and senior leaders responsible for workflow design should aim for Level 3. And your executive team should include a small number of Level 4 thinkers who can shape enterprise-wide strategy.

This mapping should be done by function, not just seniority. A marketing analyst might need Level 2 fluency to embed AI into campaign workflows, while a compliance officer might need Level 1 fluency with deep knowledge of AI governance and risk. A technical team lead might need Level 3 to redesign engineering workflows. The portfolio is as much about depth of specialization as it is about breadth of adoption.
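To connect the Step 1 diagnostic with these role targets, here is a minimal sketch that blends three 0–4 ratings (self-assessment, manager evaluation, observed behavior) into one level and then reports the average shortfall per role. The weights are hypothetical. Rounding down is a design choice, so a generous self-rating cannot lift someone above what the evidence supports. Level 4 is deliberately not a role-wide target, because it applies to only a few executives.

```python
from collections import defaultdict
from statistics import mean

# Role targets follow the Step 2 guidance. Level 4 is left out on purpose:
# it applies to a small number of executives, not to a whole role category.
TARGETS = {"individual_contributor": 1, "manager": 2, "department_head": 3}

# Hypothetical weights (sum to 10): observed behavior counts most, self-assessment least.
WEIGHTS = {"self": 2, "manager": 3, "observed": 5}

def assessed_level(self_score: int, manager_score: int, observed_score: int) -> int:
    """Blend three 0-4 ratings into one level, rounding down."""
    total = (WEIGHTS["self"] * self_score
             + WEIGHTS["manager"] * manager_score
             + WEIGHTS["observed"] * observed_score)
    return total // sum(WEIGHTS.values())

def gap_by_role(people: list[tuple[str, int, int, int]]) -> dict[str, float]:
    """Mean shortfall per role: target minus assessed level, floored at zero."""
    shortfalls = defaultdict(list)
    for role, self_score, manager_score, observed_score in people:
        level = assessed_level(self_score, manager_score, observed_score)
        shortfalls[role].append(max(0, TARGETS[role] - level))
    return {role: mean(gaps) for role, gaps in shortfalls.items()}

team = [
    ("manager", 3, 2, 1),                 # confident self-rating, thin evidence -> level 1
    ("manager", 2, 2, 2),                 # consistent signals -> level 2
    ("individual_contributor", 0, 0, 0),  # non-adopter -> level 0
]
print(gap_by_role(team))
```

The same per-person levels can also be grouped by team or function instead of role to answer the diagnostic’s third question.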

Step 3: Build Fluency Into the Flow of Work

The most effective AI training does not happen in a classroom. Microsoft’s financial services research specifically recommends “learning in the flow of work”: embedding skill-building into daily tasks so that capabilities compound over time. This means pairing formal training with practical application: guided prompts within existing tools, weekly team challenges, internal prompt libraries, and peer coaching networks.

Lloyds Banking Group’s “flight instructor” model is a template worth studying. Rather than relying solely on top-down training programs, they cultivated a thousand internal volunteers who helped colleagues build practical skills through weekly hands-on sessions. The result was a 93% daily usage rate, far above the industry average.

Step 4: Measure Outcomes, Not Just Adoption

This is where most organizations fall into strategic illusion. Early AI programs measured adoption rates: how many people logged in, how many prompts were sent. These metrics create a false sense of progress. A team with 90% tool login rates can still be using outdated prompting patterns, ignoring new capabilities, and producing work that competitors’ AI-fluent teams surpassed months ago.

More mature programs measure business outcomes: did cycle times shrink, did decision quality improve, did capacity shift to higher-value work? The shift from “How cool is it?” to “What’s the KPI and where’s the baseline?” is a hallmark of organizations that have moved past the experimentation phase. The most sophisticated organizations measure both efficiency gains and capability development, tracking whether AI is making employees faster and better at the same time.
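Measuring outcomes starts with a recorded baseline. The sketch below reports the percentage change of each metric against its pre-AI baseline. The metric names and values are hypothetical. The point is that a rework rate can stay flat even while tool logins look impressive.

```python
def pct_change(baseline: float, current: float) -> float:
    """Percentage change from the pre-AI baseline; negative means the metric went down."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) * 100 / baseline

def outcome_report(metrics: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Map each metric name to its % change, given (baseline, current) pairs."""
    return {name: round(pct_change(base, cur), 1) for name, (base, cur) in metrics.items()}

report = outcome_report({
    "cycle_time_days": (10, 7),  # hypothetical: lower is better
    "rework_rate_pct": (8, 8),   # hypothetical: unchanged despite high tool logins
})
print(report)  # {'cycle_time_days': -30.0, 'rework_rate_pct': 0.0}
```

Whether a negative change is good depends on the metric. Cycle time should fall, while something like share of hours on higher-value work should rise.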

Step 5: Address Level 0 Directly

Every organization has non-adopters. Some need support: better tools, clearer guidelines, a compelling use case for their specific role. Others are making a deliberate choice not to engage. For organizations pursuing an AI-forward strategy, persistent non-adoption is not just a training gap. It is a strategic misalignment that needs to be addressed through honest conversation about expectations and fit.

The Real Question for Leaders

The question facing leaders today is not “How advanced is our AI strategy?” and it is no longer even “Have we trained our people?” Most organizations have already set a strategy and run training.

The question that now separates organizations building durable advantage from those coasting on outdated confidence is this: How quickly does our AI operating standard update relative to the market?

If you cannot answer that with precision, if you do not know where your people’s capabilities sit today versus where the frontier has moved, then your last training program did not make you AI-enabled. It gave you a starting point that is already depreciating.

The technology is no longer the bottleneck. The human capability is. And without a structural backbone to turn chaos into capability, unmanaged adoption will continue to widen the divide between organizations that are genuinely transforming and those that are just buying tools.

An AI Fluency Matrix provides the shared language and structure required to address this challenge. It allows you to treat AI capability as a first-class transformation issue: measurable, manageable, and aligned to your strategic ambition. You will know exactly where you are, where you need to go, and how to get there.

Start with a diagnostic. Define your portfolio. Build fluency where work actually happens. Measure what matters. Refresh every six months. And treat AI fluency not as a one-time training initiative, but as a living system that compounds over time, because the alternative is silent decay.