Most AI rollouts follow the same pattern.

Leadership gets excited, a pilot launches, a handful of enthusiasts love it. And then adoption quietly stalls.

According to research reported in Harvard Business Review, companies in most industries are investing heavily in AI, yet employees tend to experiment with new tools without integrating them deeply into how work actually gets done. That leaves executives increasingly concerned about ROI.

You'll likely have heard about the 2025 MIT study that found only 5% of enterprise AI systems made it from evaluation to production. The pattern repeats: even when the tools are there, the licenses are paid for, and training has happened, nothing really changes.

Here's what we found: adoption is not a technology problem. It's a people problem. The organizations that have cracked enterprise-wide AI adoption figured this out early, and many of them acted on it with a well-designed AI Champion Program.

What Is an AI Champion Program?

An AI Champion Program is a structured, peer-led network of employees who help their colleagues adopt AI in their day-to-day work.

Champions aren't dedicated trainers or IT specialists. They're people who already understand the work, have built real AI fluency and, crucially, are trusted by the people sitting next to them.

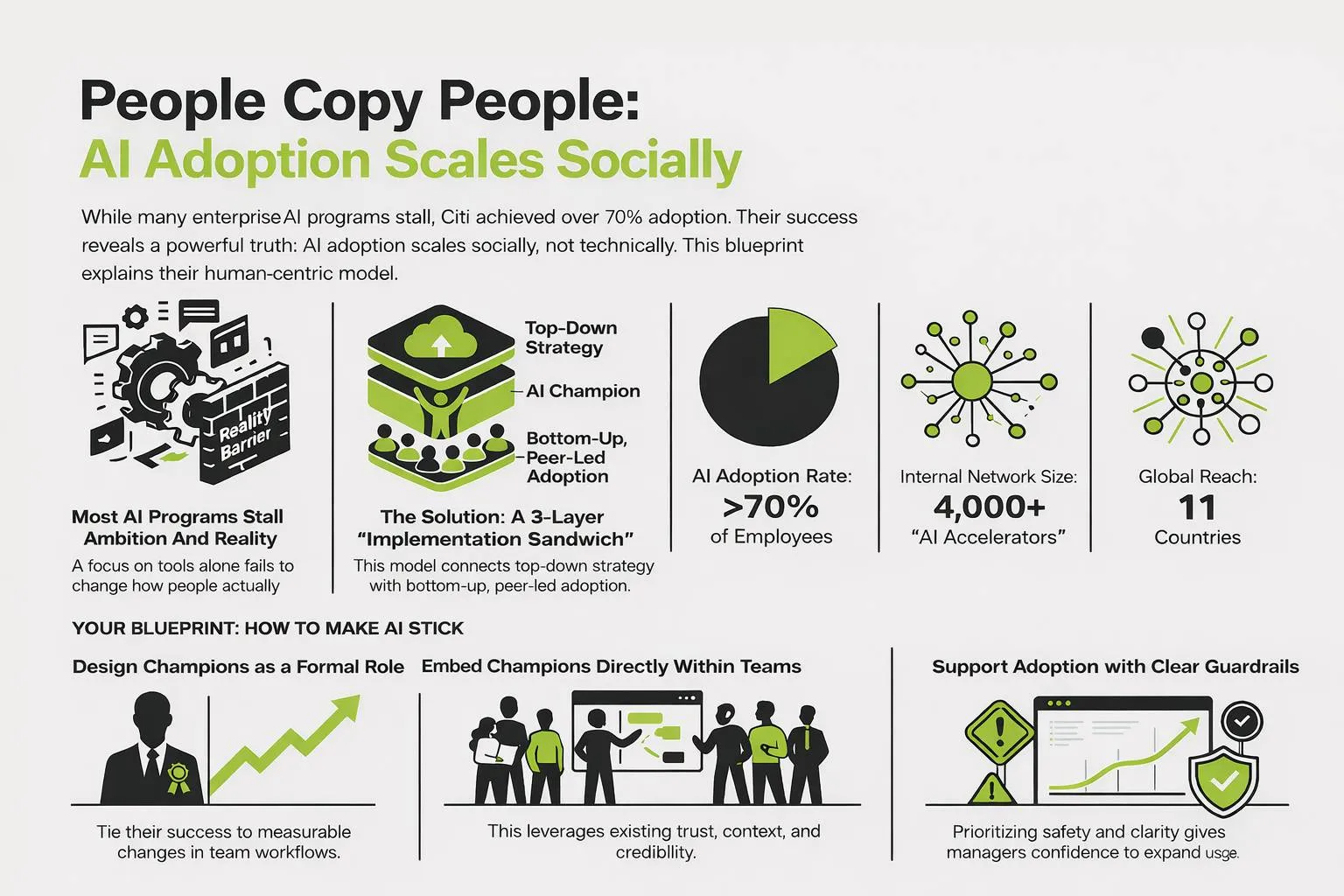

The idea is simple: people copy people. When someone on your immediate team shows you how they used AI to turn a two-hour task into fifteen minutes, that lands differently than any top-down mandate or company-wide training session ever could.

As the OpenAI Academy frames it, Champions don't create change by telling people to use AI. They influence how teams work by making AI easier to see, try, and trust through consistent, grounded actions.

The Companies Getting This Right

Two enterprises we featured make the case clearly.

Citi built an internal network of more than 4,000 AI Accelerators — peer Champions embedded across a global workforce of 182,000 employees in 84 countries — and reached over 70% adoption of firm-approved AI tools. That number would be remarkable for a startup. For a regulated financial institution operating across dozens of countries, it's exceptional. Read our full breakdown of how Citi scaled AI adoption socially →

PwC Netherlands took a phased approach, scaling from an initial group of 300 AI enthusiasts to all 6,000 employees over roughly a year. A key part of their strategy was using organizational network analysis to identify the most naturally influential people in the business — not just the most vocal AI fans — and putting them at the center of the adoption effort. See the full PwC case study →

Neither company got there by deploying better tools. They got there by investing in people.

The Top 5 Questions About AI Champion Programs, Answered

If you've started researching Champion Programs, you've probably run into the same questions over and over.

Here are the ones we hear most, with real answers.

1. What does an AI Champion actually do day-to-day?

There's a common misconception that AI Champions run workshops and deliver presentations about AI capabilities. The most effective ones don't. They're not teachers; they're workflow translators.

CSO Online describes Champions as multipliers who translate corporate strategy into team-level behavior and bring real usage, blockers, and insights from the field back to the center. Their job is to guide, activate, and normalize use of AI across the workforce.

In practice, a Champion typically spends 30 to 60 minutes a week on the role, embedded within their normal job. In that time they're doing things like:

- Showing AI working inside a real task during a team meeting or in a Slack thread

- Helping a colleague who's stuck on a specific use case, in context rather than via a generic course

- Sharing examples of what worked, and what didn't, so others can adapt rather than start from scratch

- Flagging friction points back to central teams so guardrails can be refined

The influence compounds because it travels through trust. One example shared in a team standup can lead to five people experimenting, two of whom adapt it and one of whom shares it back out. No coordination required.

2. How do you find the right people to be Champions?

Not every AI enthusiast makes a good Champion. The loudest advocates in the room aren't always the most effective ones.

The most connected people are your real targets: those others naturally turn to with questions, whose opinions get listened to, and who already help colleagues informally. PwC Netherlands used organizational network analysis to map exactly this, identifying who had the most natural influence across teams before asking them to take on the role. The results, they found, were "magical" for adoption.

For organizations without a people analytics capability to run that kind of analysis, a simpler approach works well. Look for employees who:

- Already help colleagues informally, without being asked

- Are genuinely curious about improving how work gets done

- Can communicate clearly across different functions and seniority levels

- Come from non-technical backgrounds like finance, operations, or marketing — because they see the real workflows and can spot where AI fits

Cambridge Spark notes that some of the best Champions sit outside the IT function entirely, because their credibility is grounded in the actual work rather than the technology.

3. How do you stop Champions burning out?

This is a real risk, and it's one of the most common reasons Champion Programs quietly fade out after six months.

A few things prevent it. First, set expectations clearly from the start. GitHub's internal playbook recommends framing the role as a "choose your own adventure": the expected time commitment (30–60 minutes a week) is positioned not as extra work but as a chance to contribute in whatever way feels most natural and useful to each Champion.

Second, create structure so Champions don't have to handle everything themselves. CSO Online recommends a ratio of one Champion lead for every 10–20 Champions, providing coordination and a place to escalate issues. Virtual office hours that rotate between Champions prevent any one person from becoming the default helpdesk for the whole company.

Third, and this is underrated, keep the questions interesting.

PwC's Champions found sustained energy in the more complex, challenging questions that came as adoption matured: how to automate an entire team workflow, how to use AI Studio to reduce manual handoffs, how to think about which tasks should remain human. Those questions are genuinely engaging. Fielding the same "where do I find the button?" query twenty times is not.

Finally, recognition matters more than most leaders expect. Citi used internal badges and visibility to build credibility without turning it into competition. PwC ran a weekly "winner" program in the early stages, publicly celebrating Champion-submitted use cases. Public recognition signals to the organization that this work matters, and it keeps Champions engaged without burning additional budget.

4. How many Champions do you actually need?

A useful benchmark from CSO Online: aim for 5–10% of your initial AI user base to become part of the Champion network, with one Champion lead for every 10–20 Champions.

Applied to an entire 1,000-person organization, that's somewhere between 50 and 100 Champions. For a 10,000-person organization, it's 500 to 1,000 people, distributed across functions and geographies.

That said, you don't need to hit those numbers on day one. Citi's network of 4,000 AI Accelerators was built over two years. PwC started with 300 enthusiasts in a company of 6,000. The point is to start with a critical mass that can generate visible peer-to-peer momentum, then scale from there as the program earns credibility.

The ratio that matters most isn't Champions to employees; it's Champions to teams. Every team should be able to reach a Champion without filing a support ticket. If people have to go outside their immediate working group to find help, the informal influence dynamic breaks down.
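If you want to turn the CSO Online benchmark into a planning range, the arithmetic is simple enough to script. The sketch below is purely illustrative: the function and parameter names are our own, and it assumes the 5–10% share of the initial AI user base and the one-lead-per-10–20-Champions ratio quoted above. It rounds up, since you can't appoint half a Champion.

```python
def ceil_div(numerator: int, denominator: int) -> int:
    """Integer division that rounds up, avoiding floating-point surprises."""
    return -(-numerator // denominator)


def champion_network_size(
    user_base: int,
    champion_pct: tuple[int, int] = (5, 10),
    champions_per_lead: tuple[int, int] = (10, 20),
) -> tuple[tuple[int, int], tuple[int, int]]:
    """Return ((min, max) Champions, (min, max) Champion leads).

    Illustrative only: the defaults are an assumption mirroring the
    CSO Online benchmark (5-10% of the initial AI user base as Champions,
    one lead per 10-20 Champions).
    """
    low_pct, high_pct = champion_pct
    fewest_per_lead, most_per_lead = champions_per_lead

    champions_low = ceil_div(user_base * low_pct, 100)
    champions_high = ceil_div(user_base * high_pct, 100)

    # Fewest leads: the smallest network, with each lead covering the most Champions.
    leads_low = ceil_div(champions_low, most_per_lead)
    # Most leads: the largest network, with each lead covering the fewest Champions.
    leads_high = ceil_div(champions_high, fewest_per_lead)

    return (champions_low, champions_high), (leads_low, leads_high)


for headcount in (1_000, 10_000):
    champions, leads = champion_network_size(headcount)
    print(f"{headcount:,} users: {champions[0]}-{champions[1]} Champions, "
          f"{leads[0]}-{leads[1]} leads")
```

For a 1,000-person user base this gives 50 to 100 Champions and 3 to 10 leads. Treat the output as a starting range rather than a target: coverage per team still matters more than the headline ratio.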

5. How do you measure whether a Champion Program is working?

Usage metrics are a starting point, but they're not the whole story. A 2025 Writer survey of 1,600 knowledge workers found that only 45% of employees, versus 75% of the C-suite, believe their organization has successfully adopted AI over the past year. That gap is telling: what looks like adoption from the top often isn't experienced that way on the ground, because activity and genuine adoption are very different things.

The real measure is behavior change. Ask:

- Are teams approaching work differently than they were six months ago?

- Are AI-assisted workflows becoming team norms rather than individual experiments?

- Are Champions surfacing repeatable patterns that others are actually adopting?

- Is the quality or speed of work outputs measurably improving in the teams where Champions are active?

Track how many teams are activated and how many use cases are surfaced and adopted. Where possible, add short "reinvestment reports" showing how time saved through AI was redirected to higher-value work. That evidence builds institutional memory and gives executives the context they need to sustain support for the program.

The Structure That Makes It Stick

Champion enthusiasm alone doesn't build a program that lasts. The organizations that see the best results design them with three things in place from the beginning.

Clear governance at the top. Champions need something safe to champion. Citi paired its peer-led network with firm-approved tools, explicit data boundaries, and clear use cases. That structure gave managers the confidence to support AI adoption rather than slow it down. Without it, "shadow AI" fills the gap — and that creates real risk in regulated environments. A 2025 Writer report found that 41% of Millennial and Gen Z employees admit to quietly sabotaging their company's AI strategy when trust in the process breaks down.

Real workflow integration in the middle. Another company we analyzed, HubSpot, simply "made AI boring" by embedding it in tools employees already used every day: Teams, Outlook, Excel, PowerPoint. They also asked leaders to explicitly encourage teams to run work through AI before submitting it for review. Small nudges, consistently applied, add up. The middle layer matters in another way, too: the best organizations run AI transformation as a three-layer sandwich, with an "AI Lab" or Center of Excellence in the middle that can direct and support the Champions initiative.

Recognition that builds culture, not competition. Both Citi and PwC recognized Champions publicly through internal badges, weekly callouts, and prompt libraries that showcased their contributions. That visibility shows the wider organization the work is valued, while keeping the culture collaborative.

How to Get Started

You don't need to start with 4,000 Champions. Nobody does. What you do need from day one is intention.

That means defining what Champions are expected to do and what support they'll get. It means pairing grassroots enthusiasm with enough governance that people feel safe experimenting. And it means treating adoption as a change management challenge. McKinsey's 2025 State of AI report found that most organizations are still navigating the transition from experimentation to scaled deployment, and that the highest-performing companies stand out not because of their tools but because they treat AI as a catalyst to transform how work is actually done.

Start small. Find your connectors. Embed them in the work. And measure whether behavior is actually changing.

The tools are ready. The question is whether your people structure is ready to support them.

The organizations winning at AI adoption right now aren't the ones with the biggest budgets or the most advanced models. They're the ones who understood, early, that technology spreads through people — and built accordingly.