Byline: Based on the March 27, 2026 Lead with AI PRO live session with Ethan Smith, Founder and CEO of Graphite.

Most of the advice circulating about Answer Engine Optimisation is built on short, search-style prompts.

That's a problem, because 60% of AI prompts are ten words or longer, and most marketing tools are not tracking them.

Ethan Smith, whose growth agency works with Netflix, OpenAI, MasterClass, Adobe, and Meta, has spent the last year mapping what actually gets cited in ChatGPT, Claude, and Gemini.

His core finding: AEO is almost entirely a long-tail game, and almost nobody is playing it the right way.

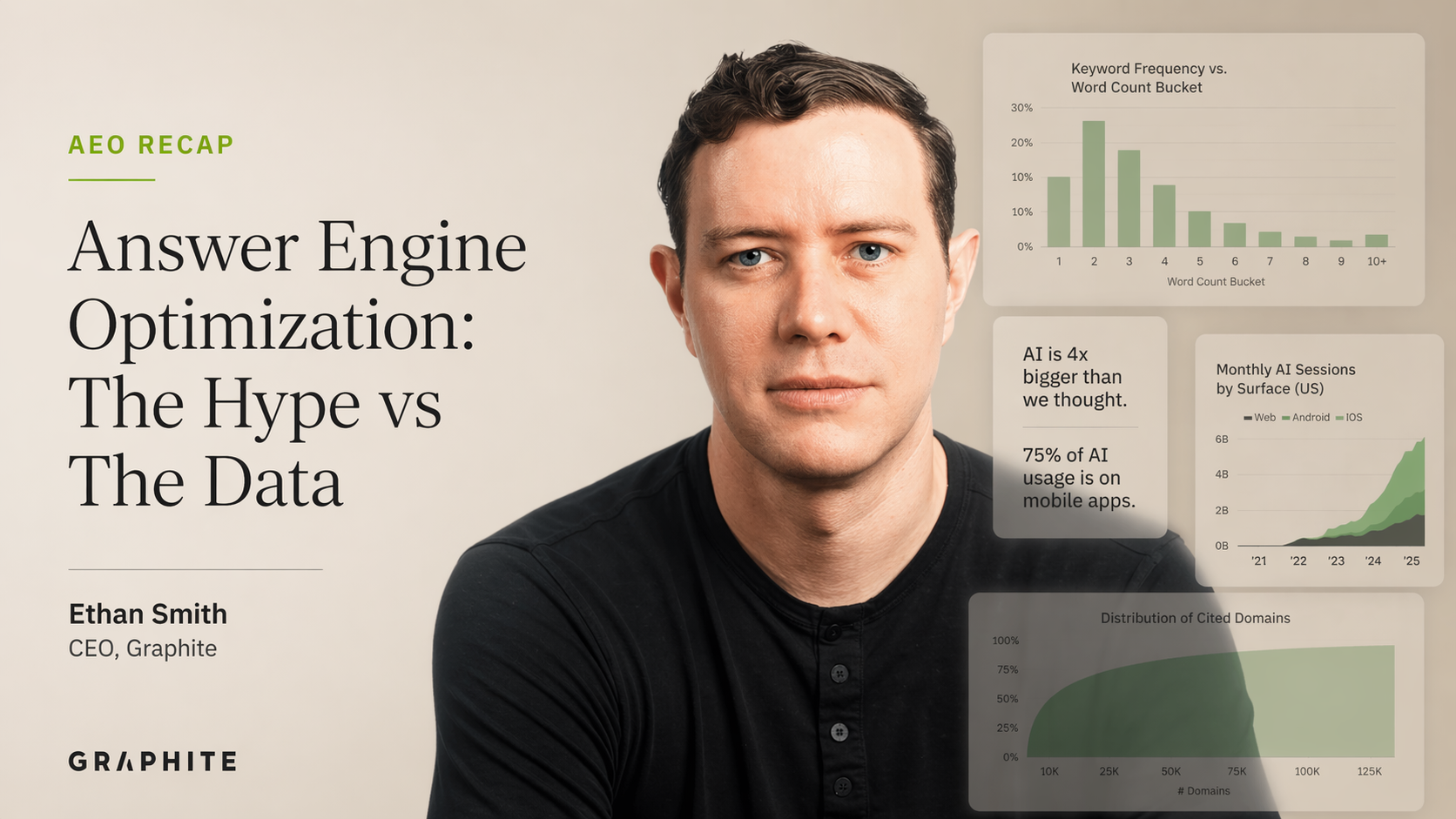

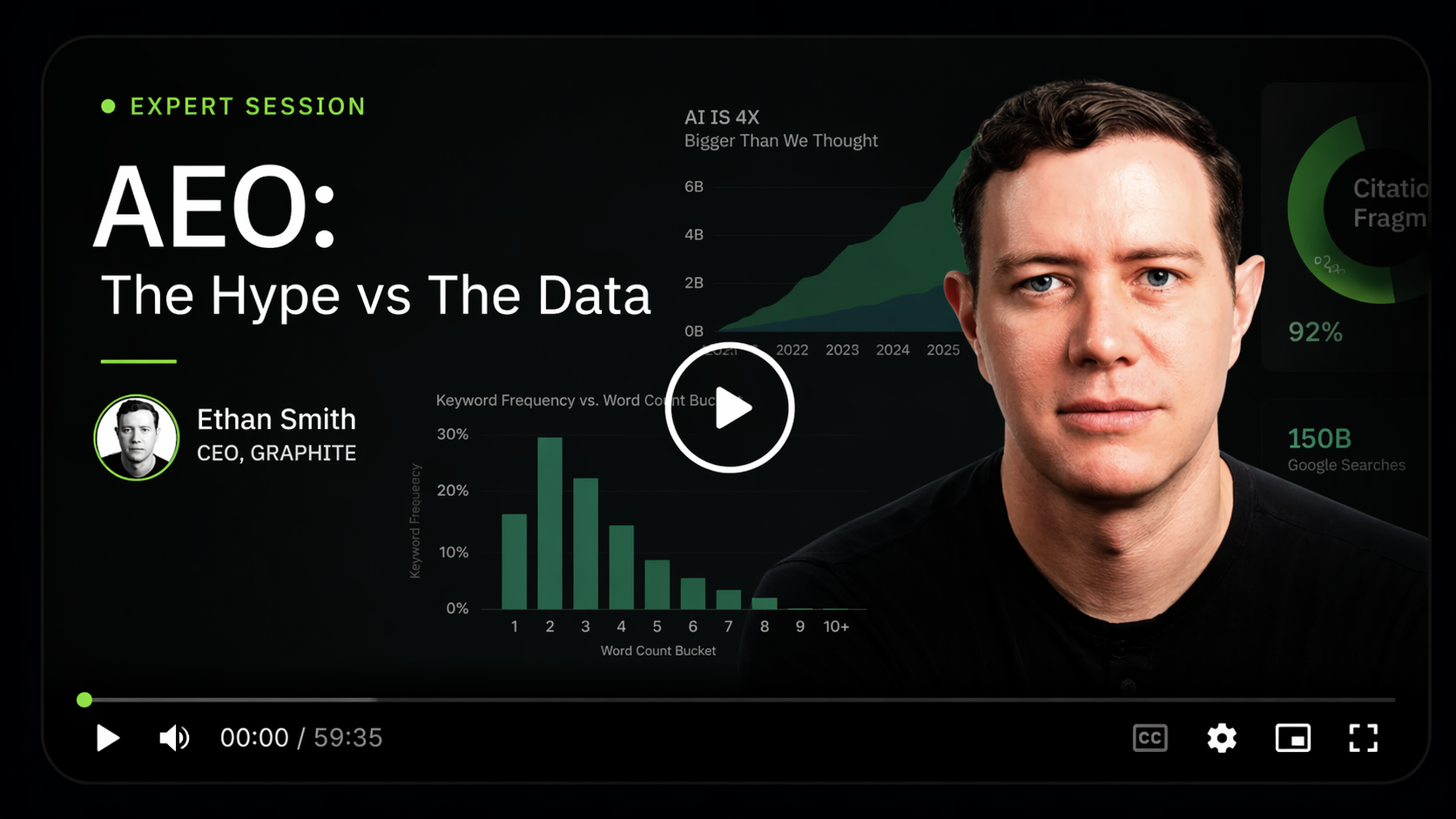

AI usage is four to five times larger than most reports say, and search isn't shrinking

Smith's recent research on AI usage shows the AI market is four to five times larger than published reports suggest.

"Most of the stuff published about AI is only on web, but the majority of the usage is actually on mobile apps," he explained. In the US, most of that app usage happens on iOS; outside the US, it's mostly Android.

The other piece of conventional wisdom that does not survive the data is the idea that search is in decline.

"Whenever a new category opens up, another category might go down. But in this case, it's not going down."

The parallel he keeps coming back to is mobile apps: when they launched, people said the web would die. It didn't. People just used technology more.

Total combined usage across search engines and AI is up 26% worldwide since ChatGPT launched.

The pie is getting bigger, not just being redistributed.

Answer Engine Optimisation is almost entirely a long-tail game

The single most important insight Smith shared: the query distributions for search and AI are inverses of each other.

In search today, only about 4% of queries are ten words or longer. The long tail, in his words, "in some sense, doesn't even exist in search anymore." In AI, the opposite is true: 60% of prompts are ten words or longer. "It's the inverse distribution. Long-tail answer engine optimisation is essentially almost all that answer engine optimisation is."
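
One way to check whether a prompt-tracking set reflects this distribution is to measure what fraction of it clears the ten-word mark. Below is a minimal Python sketch, assuming your tracked prompts are available as plain strings; the function names and sample prompts are hypothetical, and counting whitespace-separated tokens is a simplification of "words".

```python
def word_count(prompt: str) -> int:
    """Count whitespace-separated tokens as a rough word count."""
    return len(prompt.split())


def long_tail_share(prompts: list[str], threshold: int = 10) -> float:
    """Return the fraction of prompts with at least `threshold` words."""
    if not prompts:
        return 0.0
    long_ones = sum(1 for p in prompts if word_count(p) >= threshold)
    return long_ones / len(prompts)


if __name__ == "__main__":
    # Hypothetical tracked prompts, for illustration only.
    sample = [
        "best payroll software",
        "rippling vs deel",
        "we are a 40 person startup with contractors in three countries, "
        "which payroll tool handles that best",
        "how bad is support after implementation for an HR platform if we "
        "only have one ops person",
    ]
    print(f"Long-tail share: {long_tail_share(sample):.0%}")  # prints 50%
```

If a tracked set scores far below the 60% Smith cites for AI prompts, it likely leans toward short, search-style queries.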

Why?

Because users can now prompt for things too specific or complex to search for. That's also why the pie is growing: a new set of use cases has opened up that didn't exist before.

The problem is that most marketers are still tracking short, head-term prompts.

"Marketers are not changing," Smith said. "Most of what people are looking at are not representative. They are short search-related prompts."

The opportunity is exactly where it was in the early days of long-tail SEO: if you have the data and others don't, you can exploit it.

Find real prompts in primary sources, not from ChatGPT itself

If the long tail is where almost all AEO opportunity sits, the next question is how to find the real prompts. Smith's answer: don't ask AI to generate them.

"If you ask ChatGPT, guess what the tail is? The answers are very relevant and zero volume," he said. "It's the same with search. If you say, 'suggest some search keywords,' they're really relevant keywords with no volume." Good ideas, no real basis.

Instead, go where the conversation actually happens. Smith demonstrated this with Rippling: he prompted Gemini to pull real Reddit threads about the company, sorted by upvotes, and got a table of questions people were actively asking, such as "Rippling versus Deel. How bad is the support once implementation is over?" In these threads, other people are controlling the narrative, and each question maps directly to a page Rippling could build.
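
Smith did this through Gemini; the sketch below does not call Gemini or any Reddit API. It assumes you have already exported thread titles and upvote counts (the `Thread` record, its fields, and the sample threads are hypothetical) and shows only the ranking step: keep question-like titles, then sort by upvotes. The question heuristic is a rough assumption and will miss some phrasings.

```python
from dataclasses import dataclass


# Hypothetical record for one exported thread; field names are assumptions.
@dataclass
class Thread:
    title: str
    upvotes: int


# Words that often open a question (a rough, assumed heuristic).
QUESTION_WORDS = {"how", "what", "why", "which", "is", "should", "does", "can"}


def looks_like_question(title: str) -> bool:
    """True if the title ends with '?', opens with a question word, or compares brands."""
    t = title.strip().lower()
    words = t.split()
    if not words:
        return False
    if t.endswith("?") or words[0] in QUESTION_WORDS:
        return True
    # Head-to-head comparisons ("X vs Y") are also treated as questions.
    return " vs " in t or " versus " in t


def top_questions(threads: list[Thread], limit: int = 10) -> list[Thread]:
    """Keep question-like threads and return the most upvoted first."""
    questions = [th for th in threads if looks_like_question(th.title)]
    return sorted(questions, key=lambda th: th.upvotes, reverse=True)[:limit]


if __name__ == "__main__":
    threads = [  # hypothetical sample data
        Thread("Rippling versus Deel for a small team", 412),
        Thread("How bad is the support once implementation is over?", 388),
        Thread("Our onboarding checklist template", 95),
    ]
    for th in top_questions(threads):
        print(th.upvotes, th.title)
```

Each surviving title is a candidate page topic; the upvote count is a crude signal of how many people share the question.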

The same logic applies to internal data.

"Do you have a Slack channel? Do you have customer support? Do you have sales calls? Go through and mine. What are the questions people are asking about me? That's what's in the tail," Smith said. He described using Claude's Cowork feature with a Slack connector to run exactly this kind of analysis on his own client projects.

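A rule-based stand-in for that kind of mining might look like the Python below. It is not how Claude's Cowork feature or a Slack connector works; it assumes messages, tickets, or call notes have already been exported as plain text (the sample messages and function names are hypothetical), pulls out sentences ending in a question mark, and counts repeats. Exact-match grouping after normalisation will miss paraphrased questions, which is one reason an AI-assisted pass can help.

```python
import re
from collections import Counter


def extract_questions(text: str) -> list[str]:
    """Split text into sentences and keep the ones ending in a question mark."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = re.split(r"(?<=[.!?])\s+", text)
    return [s.strip() for s in sentences if s.strip().endswith("?")]


def normalise(question: str) -> str:
    """Lowercase and collapse whitespace so identical questions group together."""
    return " ".join(question.lower().split())


def recurring_questions(messages: list[str], min_count: int = 2) -> list[tuple[str, int]]:
    """Count normalised questions across messages; keep those seen at least min_count times."""
    counts = Counter(normalise(q) for msg in messages for q in extract_questions(msg))
    return [(q, n) for q, n in counts.most_common() if n >= min_count]


if __name__ == "__main__":
    messages = [  # hypothetical exported messages
        "Hi team. Does it integrate with NetSuite? Thanks!",
        "Does it integrate with   NetSuite?",
        "Can we run payroll in Canada? Does it integrate with NetSuite?",
    ]
    for question, count in recurring_questions(messages):
        print(count, question)
```
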
AI summarises consensus, so you need to be cited many times across many sites

One of the most counterintuitive things Smith showed was a query for "what's the best website builder for designers" in ChatGPT. Webflow came up first in the AI's answer. But when he looked at the actual sources the AI had pulled, Webflow wasn't even listed.

"AI is summarising consensus," Smith said. "So it's not rank once or rank best. It's rank many times, as many times as possible."

Webflow wins in AI because it's mentioned everywhere else, not because it ranks first in any single result. That reshapes the whole strategy.

"The more people who are mentioning your brand or your product, the better. As much as possible." When asked whether this makes AEO significantly more work than SEO ever was, Smith didn't hesitate: "It's more complex than SEO because SEO is just my site. This is now off-site, and so many off-sites."

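If the answer reflects consensus, one rough proxy for visibility is breadth: how many distinct pages mention a brand, rather than where any single page ranks. A minimal Python sketch, assuming you have already collected the text of candidate source pages; the URLs, page text, brand list, and function name are hypothetical, and the case-insensitive substring match is a simplification that can over-count (for example, when a brand name appears inside another word).

```python
from collections import Counter


def mention_breadth(pages: dict[str, str], brands: list[str]) -> Counter:
    """For each brand, count how many distinct pages mention it at least once.

    `pages` maps a source URL to that page's text. Matching is a simple
    case-insensitive substring check (an assumption for illustration).
    """
    breadth = Counter()
    for text in pages.values():
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                breadth[brand] += 1  # at most one increment per page per brand
    return breadth


if __name__ == "__main__":
    pages = {  # hypothetical source pages
        "https://example.com/review": "Webflow and Framer are both strong picks.",
        "https://example.org/roundup": "For designers, Webflow stands out.",
        "https://example.net/thread": "I switched from Squarespace to Webflow.",
    }
    print(mention_breadth(pages, ["Webflow", "Framer", "Squarespace"]))
```

A brand that no single page ranks first can still lead on breadth, which is the pattern Smith described with Webflow.
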
The citation mix is also more fragmented than most people realise.

Reddit and YouTube get most of the attention in AEO discussions, but Reddit is only 2.36% of all cited domains and YouTube is 1.65%. "You can't just have a Reddit and YouTube strategy and then be done with it. You need to have a wide net strategy," which Smith described as PR, affiliates, viral content, and content syndication stacked together.
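
Figures like Smith's 2.36% and 1.65% describe how citations spread across domains. As a sketch of how such shares could be computed, assuming you have logged the cited URLs from many prompt runs (the URLs below are hypothetical and do not reproduce his numbers), group them by domain and divide by the total. Treating `www.` and bare domains as the same site is a design choice made here, not something the source specifies.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_shares(cited_urls: list[str]) -> list[tuple[str, float]]:
    """Return each domain's share of all citations, largest first."""
    # Normalise host names so "www.example.com" and "example.com" match.
    domains = [urlparse(u).netloc.lower().removeprefix("www.") for u in cited_urls]
    total = len(domains)
    if total == 0:
        return []
    return [(d, n / total) for d, n in Counter(domains).most_common()]


if __name__ == "__main__":
    cited = [  # hypothetical citation log
        "https://www.reddit.com/r/example",
        "https://www.youtube.com/watch?v=example",
        "https://example.com/best-builders",
        "https://example.com/another-post",
    ]
    for domain, share in domain_shares(cited):
        print(f"{domain}: {share:.2%}")
```

A long list of small shares, rather than one or two dominant domains, is what a "wide net" strategy is responding to.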

You can measure AI: everything is a probability distribution

A common objection to AEO is that AI is too unpredictable to measure. Smith rejects this framing directly.

"It's kind of like if somebody said, 'I'm going to have a son. How tall is he going to be?' And you're like, 'Well, every human is totally unique. So you could never know.' That's not true. Humans generally are 5′5″ to 6′5″. There's a probability distribution despite the fact that every human is a unique snowflake."

Every AI answer is a unique snowflake, but the distribution is stable. Smith walked through a study where his team asked ChatGPT for the best flavour of ice cream thousands of times. Vanilla came up most often. Chocolate was second. Some answers, like Thai tea, showed up about 4% of the time. "You can measure AI with probability distributions," he said.
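
The counting half of such a study fits in a few lines: record each answer to the same prompt, then convert the counts into frequencies. The Python below assumes the answers have already been collected from repeated runs (the collection step is left out); the sample answers and function name are made up for illustration and are not the study's data.

```python
from collections import Counter


def answer_distribution(answers: list[str]) -> dict[str, float]:
    """Turn repeated answers to one prompt into an empirical probability distribution."""
    if not answers:
        return {}
    # Light normalisation so "Vanilla" and "vanilla " count as the same answer.
    counts = Counter(a.strip().lower() for a in answers)
    total = sum(counts.values())
    return {answer: n / total for answer, n in counts.most_common()}


if __name__ == "__main__":
    # Hypothetical answers from ten runs of the same prompt.
    answers = ["Vanilla"] * 5 + ["Chocolate"] * 3 + ["Thai tea", "Pistachio"]
    for answer, p in answer_distribution(answers).items():
        print(f"{answer}: {p:.0%}")
```

Any single run is unpredictable, but with enough runs the frequencies settle, which is the sense in which the distribution is measurable.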

The same principle applies to brand citations. You measure how often you appear and in what position, across many prompt runs. Ranking in AI looks less like a single static position and more like a weather forecast: predictable in aggregate, variable in any single instance.
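
A minimal sketch of that measurement, assuming each run's answer has already been parsed into an ordered list of brand names (the parsing step, the sample runs, and the function names are hypothetical). It reports the appearance rate with a Wilson score interval, one standard way to put error bars on a proportion, plus the mean 1-based position in the runs where the brand appears.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (about 95% with z=1.96)."""
    if trials == 0:
        return (0.0, 0.0)
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2))
    return (centre - half, centre + half)


def brand_visibility(runs: list[list[str]], brand: str) -> dict[str, float]:
    """Appearance rate (with interval) and mean 1-based position of `brand` across runs."""
    positions = [run.index(brand) + 1 for run in runs if brand in run]
    low, high = wilson_interval(len(positions), len(runs))
    return {
        "appearance_rate": len(positions) / len(runs) if runs else 0.0,
        "rate_low": low,
        "rate_high": high,
        # NaN when the brand never appears, since no position exists.
        "mean_position": sum(positions) / len(positions) if positions else float("nan"),
    }


if __name__ == "__main__":
    runs = [  # hypothetical parsed answers from four runs of one prompt
        ["Webflow", "Framer", "Wix"],
        ["Framer", "Webflow"],
        ["Squarespace", "Wix"],
        ["Webflow"],
    ]
    print(brand_visibility(runs, "Webflow"))
```

With only four runs the interval is wide; it narrows as runs accumulate, which is the "predictable in aggregate" half of the weather-forecast analogy.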